Recently I was invited to provide a post for the Content Marketing Institute’s blog. CMI is the brainchild of Joe Pulizzi, a colleague, friend, and self-proclaimed ‘poster child’ for Content Marketing. Joe and I collaborated on “Engagement: Understanding It, Achieving It, Measuring It,” a whitepaper/e-book published earlier this year. His vision with CMI is to help marketers (particularly B-to-B marketers) with the how-to’s of content marketing.

Charged with providing additional insight on the measurement of content marketing, I pondered my post and kept coming back to what, deep down, we all ask ourselves about any marketing communication:

Does it really work?

Now, back in my days as an undergraduate, my question was about advertising specifically: Does advertising really work? Can we prove it?

And I recalled the adages of some historical figures who reportedly said:

“I know half my advertising budget is wasted; I just wish I knew which half;” and

“There are three kinds of lies: lies, damned lies and statistics;” and even this one:

“X% of companies that continued to advertise through the Great Depression are still vibrant corporations today. The X% that cut back you’ve likely never heard of, nor will you.”

(Okay, so I have recall of a few pithy statements meant to back up the idea that advertising is important but tricky to measure, let alone prove that it works.)

But isn’t that the same methodology that supposedly supports advertising’s effectiveness – that is, recall? That you’ve “been reached” or you have “awareness?” That you then just know?

I feel many of us marketing-types out there believe advertising works because, well, we believe it does. We just know.

Now, see how that flies in the CFO’s office!

My post, here, talks about proving the efficacy of your content marketing efforts by setting up an Experimental Design to actually test that they work: to show the corner office (or your own conscience) that “when you plug X in, Y happens.”

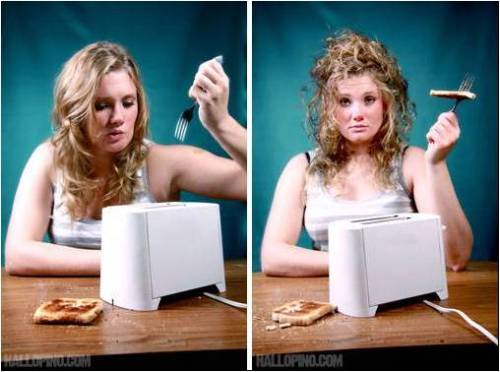

Photo credit: hallopino.com

But as important as demonstrating the how-to’s of measuring Content Marketing using Experimental Design is, I think it is key to remember that any marcomm effort is a strategy to help accomplish a (marketing) goal. So, the independent variable here is the marcomm effort, and the dependent variable is the marketing goal. It helps me, at least, to demystify the “science” behind it all.

Viewed this way, it also helps avoid ‘over-reaching’ with your hypothesis. Rather than saying “we need to prove the ROI of Content Marketing” or “we need to measure the return on equity of Engagement,” your experiment should strive for a more direct correlation or cause: “prove that increasing the frequency of marketing content will lead to a greater proportion of qualified leads amongst all leads.”
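To make that kind of hypothesis concrete, here is a minimal sketch of how such an experiment might be evaluated. It assumes your leads are split into a control group (current content frequency) and a test group (increased content frequency), and it applies a one-sided two-proportion z-test, a standard statistical technique chosen here for illustration rather than a method this post prescribes. The function name and the lead counts in the usage example are hypothetical, not real campaign data.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(qualified_a: int, leads_a: int,
                          qualified_b: int, leads_b: int) -> tuple[float, float]:
    """Hypothetical helper: one-sided test that group B (more content) has a
    higher proportion of qualified leads than group A (control).

    Returns (z statistic, one-sided p-value).
    """
    p_a = qualified_a / leads_a  # share of qualified leads, control
    p_b = qualified_b / leads_b  # share of qualified leads, increased frequency
    # Pooled proportion under the null hypothesis that both groups share one rate
    pooled = (qualified_a + qualified_b) / (leads_a + leads_b)
    if pooled in (0.0, 1.0):
        raise ValueError("all or no leads qualified; the test is undefined")
    se = sqrt(pooled * (1 - pooled) * (1 / leads_a + 1 / leads_b))
    z = (p_b - p_a) / se
    # Probability of seeing a z at least this large if the null hypothesis holds
    p_value = 1 - NormalDist().cdf(z)
    return z, p_value


if __name__ == "__main__":
    # Illustrative counts only: 40 of 400 leads qualified in the control group,
    # 66 of 400 in the group that received more frequent content.
    z, p = two_proportion_z_test(40, 400, 66, 400)
    print(f"z = {z:.2f}, one-sided p = {p:.4f}")
```

The test is one-sided because the hypothesis is directional (more content leads to a *greater* share of qualified leads). A small p-value supports that narrow claim; it does not by itself prove the ROI of Content Marketing, which is exactly the over-reach the hypothesis is meant to avoid.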

DO measure your marcomm efforts.

DO set aside monies and time to do so.

DO so consistently and regularly.

Think of it as Experimental Design itself, with a hypothesis:

“If I take the time and effort to measure my Engagement efforts, I will produce insights and, ultimately, results.”

And that works.